To find the coefficients of the polynomial regression model, we usually resort to the least-squares method; that is, we look for the values of $a_0, a_1, \dots, a_n$ that minimize the sum of squared distances between each data point $(x_i, y_i)$ and the corresponding point predicted by the polynomial regression equation, $(x_i,\ a_0 + a_1 x_i + a_2 x_i^2 + \dots + a_n x_i^n)$. When applying the least-squares method, you are minimizing the sum $S$ of the squared residuals $r_i$ over all $m$ data points:

$$S = \sum_{i=1}^{m} r_i^2 = \sum_{i=1}^{m} \left( y_i - \left( a_0 + a_1 x_i + \dots + a_n x_i^n \right) \right)^2$$
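As a rough illustration of polynomial least squares, the pure-Python sketch below builds the normal equations $(X^\top X)\,a = X^\top y$, where row $i$ of $X$ is $(1, x_i, x_i^2, \dots, x_i^n)$, and solves them by Gaussian elimination. The helper names `fit_polynomial`, `predict` and `sum_squared_residuals` are hypothetical, and the data are made up: they are generated from $y = 1 + 2x + 3x^2$, so a degree-2 fit should recover coefficients close to $[1, 2, 3]$ with a residual sum close to zero.

```python
# Minimal sketch: polynomial least squares via the normal equations.
# fit_polynomial, predict and sum_squared_residuals are hypothetical helper names.

def fit_polynomial(xs, ys, degree):
    """Return [a_0, a_1, ..., a_n] minimizing the sum of squared residuals."""
    n = degree + 1
    # Normal equations (X^T X) a = X^T y, where X[i][j] = x_i ** j.
    # Entry (r, c) of X^T X is the sum of x_i ** (r + c).
    power_sums = [sum(x ** k for x in xs) for k in range(2 * degree + 1)]
    A = [[power_sums[r + c] for c in range(n)] for r in range(n)]
    b = [sum(y * x ** r for x, y in zip(xs, ys)) for r in range(n)]

    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n):
                A[r][c] -= factor * A[col][c]
            b[r] -= factor * b[col]

    # Back substitution.
    coeffs = [0.0] * n
    for r in range(n - 1, -1, -1):
        rest = sum(A[r][c] * coeffs[c] for c in range(r + 1, n))
        coeffs[r] = (b[r] - rest) / A[r][r]
    return coeffs


def predict(coeffs, x):
    """Evaluate a_0 + a_1 x + ... + a_n x^n."""
    return sum(a * x ** k for k, a in enumerate(coeffs))


def sum_squared_residuals(coeffs, xs, ys):
    """S = sum of (y_i - predicted y_i)^2 over all data points."""
    return sum((y - predict(coeffs, x)) ** 2 for x, y in zip(xs, ys))


# Made-up data generated from y = 1 + 2x + 3x^2.
xs = [0, 1, 2, 3, 4]
ys = [1, 6, 17, 34, 57]
coeffs = fit_polynomial(xs, ys, degree=2)
print([round(a, 6) for a in coeffs])
print(sum_squared_residuals(coeffs, xs, ys))
```

Solving the normal equations directly keeps the idea visible, but it can become numerically unstable for high-degree polynomials; in practice a library routine such as NumPy's `numpy.polyfit` is usually the safer choice.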

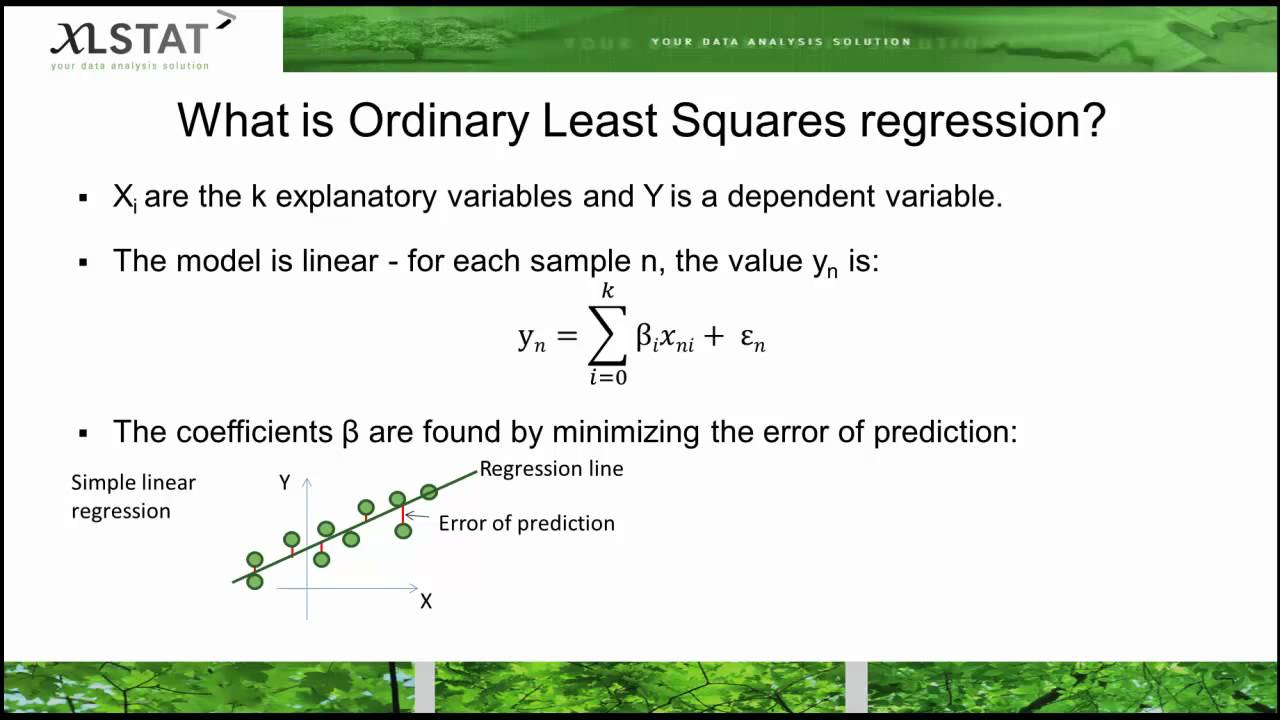

Machine learning is about trying to find a model or a function that describes a data distribution. One of the simplest predictive models is a line drawn through the data points, known as the least-squares regression line. Unless all the data points lie on a straight line, it is impossible to predict every point perfectly with a linear method such as a regression line. This is where residuals and the least-squares method come into play.

In the example plotted below, no line passes directly through all the data points, so we instead settle on the line that minimizes the distance to all points in our dataset. This is known as the least-squares method because it minimizes the sum of the squared vertical distances between the points and the line.

*Figure: A visual demonstration of the linear least-squares method.*

Of course, we could also apply a non-linear predictive model flexible enough to pass through every data point. In fact, this is what more advanced machine learning models do. This approach has two drawbacks, however. First, non-linear functions are mathematically much more complicated and thus more difficult to interpret. Second, the more closely you fit a model to a specific data distribution, the less likely it is to perform well once the data distribution changes. Balancing how closely a model fits the data it was trained on against how well it generalizes is one of the central problems in machine learning and is known as the bias-variance tradeoff. So we'll stick with a linear model for now.

What Are Residuals?

The vertical differences between the actual data points and the values predicted by the regression line are known as residuals.
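To make residuals and the least-squares line concrete, here is a small pure-Python sketch that fits a line with the standard closed-form formulas (slope $= S_{xy}/S_{xx}$, intercept $= \bar{y} - \text{slope}\cdot\bar{x}$) and then computes each residual as the actual value minus the predicted value. The helper names `fit_line`, `residuals` and `sum_squared` are hypothetical, and the four data points are made up so that no straight line passes through all of them.

```python
# Minimal sketch: simple linear least-squares regression and its residuals.
# fit_line, residuals and sum_squared are hypothetical helper names; the data are made up.

def fit_line(xs, ys):
    """Return (intercept, slope) of the least-squares regression line."""
    m = len(xs)
    mean_x = sum(xs) / m
    mean_y = sum(ys) / m
    # Closed-form solution: slope = S_xy / S_xx, intercept = mean_y - slope * mean_x.
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    slope = s_xy / s_xx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def residuals(xs, ys, intercept, slope):
    """Actual value minus the value predicted by the line, for each point."""
    return [y - (intercept + slope * x) for x, y in zip(xs, ys)]


def sum_squared(values):
    """Sum of squares, e.g. of the residuals."""
    return sum(v * v for v in values)


xs = [1, 2, 3, 4]
ys = [2, 3, 5, 4]  # no straight line passes through all four points
intercept, slope = fit_line(xs, ys)
r = residuals(xs, ys, intercept, slope)
print(round(intercept, 3), round(slope, 3))  # → 1.5 0.8
print([round(v, 3) for v in r])              # → [-0.3, -0.1, 1.1, -0.7]
print(round(sum_squared(r), 3))              # → 1.8
```

Nudging the slope or intercept away from these values (for example to a slope of 0.9) increases the sum of squared residuals, which is exactly what "least squares" means. Note also that for a least-squares line with an intercept, the residuals sum to zero.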
In this post, we will introduce linear regression analysis. The focus is on building intuition, and the math is kept simple. If you want a more mathematical introduction to linear regression analysis, check out this post on ordinary least squares regression.
